Voice personas and placeonas are key in the design and development of voice user interfaces.

The rise of voice devices, such as Amazon’s Echo and Echo Dot, has ushered in a new era of interaction. The demand for quick information and immediate service has increased, and many user needs can now be met through a simple voice request.

I recall sitting at a coffee shop in downtown San Francisco, waiting for my rideshare to arrive. When my rideshare app began to crash, immediate panic followed. “No worries,” I reassured myself. “Google, call an Uber.” Seconds later, a confirmation pop-up alerted me to my ride.

Designing for voice devices shares similarities with the process of designing for ‘screen-first’ devices, like an iPhone or tablet. The first question a designer has to ask themselves is, “Who am I designing for?”

UX designers create a persona—a term used in user-centered design and marketing to describe a fictional character created to represent a user type that might use a site, brand, or product in a certain way. For ‘screen-first’ designers, these are called “user personas.” So, what do we call user personas that interact with devices through their voice?

You guessed it: Voice personas.

This guide explains what voice personas are, how they are created, and how they fit into the voice design process. It also covers the concept of “placeonas”: the places and situations in which a user would interact with a product using voice, e.g. while riding a bike.

We’ve divided this guide into the following sections:

- What are voice personas?

- What are placeonas?

- How to create conversations with voice devices

- The importance of voice personas

Let’s go!

1. What are voice personas?

Siri, Cortana, OK Google, and Amazon Echo, to name just a few voice assistants and voice-enabled devices, are here to stay! The first question people ask is, “Will they eventually take over ‘on-screen’ interaction?” The quick and logical answer is no; however, on-screen interaction is becoming less essential. As mentioned at the beginning of this post, voice requests are increasingly becoming the norm. More than ever, you will see strangers who appear to be awkwardly talking to themselves. A few years from now, if you find someone yelling “Someone please get me a coffee” at a grocery store, take it literally. Don’t be surprised if a barista shows up ten minutes later with a cup of joe.

A voice UX designer, or voice interaction designer, is expected to create a persona of who will interact with the product. In the example above, the avid coffee drinker at the grocery store might belong to a certain age group, be in a rush, or need to get caffeinated before a long car ride home. This is where you would begin the process of creating a “voice persona.” A voice persona is closely related to a target audience: in other words, who you are aiming to advertise and sell your product to.

Ultimately, just like a UX designer for interfaces, a voice designer creates a voice persona so they can solve a user problem. With a carefully crafted voice persona, you have a good idea of how the user will interact with your product.

Let’s say you’re designing a voice persona for a police officer looking for directions to a particular destination. You can make certain assumptions regarding user needs. Firstly, you would deduce that this interaction should be quick and immediate. Given the gravity of the situation, the user (in this case, a police officer) should be able to accomplish the task within 1-2 sentences or interactions.

What if you were designing for a stay-at-home dad who is trying to cook a meal for his kids? The interaction between the dad and the device may take at least 4-5 sentences. The dad might ask for a few dinner suggestions before making a specific request. Also, unlike the police officer’s, this interaction could take at least 5-10 minutes.

Just as you would when designing user personas for on-screen products, you should narrow your voice personas down to three or four fictional users. This makes the process of figuring out pain points, frustrations, and user needs much easier.
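To make the contrast between the two personas concrete, here is a minimal sketch of how a persona and its expected conversation length could be written down and checked against a draft script. Everything in it is hypothetical: the `VoicePersona` class, the `fits_persona` helper, and the `max_turns` figures are illustrative assumptions loosely based on the sentence counts mentioned above, not part of any real toolkit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VoicePersona:
    """A hypothetical, stripped-down voice persona."""
    name: str
    goal: str
    max_turns: int  # assumed upper limit on user turns for this persona


def fits_persona(persona, script):
    """Return True if the script's user turns stay within the persona's budget."""
    user_turns = sum(1 for speaker, _ in script if speaker == "user")
    return user_turns <= persona.max_turns


# Assumed budgets: the officer needs a very short exchange, the dad can browse.
OFFICER = VoicePersona("police officer", "get directions quickly", max_turns=2)
DAD = VoicePersona("stay-at-home dad", "choose and cook dinner", max_turns=5)

# A draft script as (speaker, line) pairs; the wording is made up.
DINNER_SCRIPT = [
    ("user", "What can I make for dinner?"),
    ("device", "How about pasta or tacos?"),
    ("user", "What do I need for tacos?"),
    ("device", "Tortillas, beans, cheese, and salsa."),
    ("user", "Okay, start the taco recipe."),
    ("device", "Step one: warm the tortillas."),
]

print(fits_persona(DAD, DINNER_SCRIPT))      # three user turns fit the dad's budget
print(fits_persona(OFFICER, DINNER_SCRIPT))  # too long for the officer
```

A check like this is only a rough guardrail, but it can flag a script that asks too much of a persona who needs to finish quickly.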

2. What are placeonas?

Bill Buxton, Principal Researcher at Microsoft Research, coined the term “placeonas.” In order to anticipate the issues or problems a voice-enabled device might need to solve for users, he began designing scenarios. He wanted to show how a user’s location limits the types of interaction a user can complete when interacting with a device.

For example, while driving, you are at a disadvantage. You can glance at the map of your journey on your smartphone, but only very quickly! You need to keep your eyes on the road to avoid an accident. A voice UX designer would be aware of this “placeona.” They would design based on the knowledge that a driver isn’t completely hands-free or eyes-free.

When designing this interaction, a voice designer might ask themselves:

- How would a user interacting with a smartphone while driving rely on voice interaction?

- What kind of conversation would that user have with the device?

- How personable should the chat be?

- How loud should the voice be?

- Are there options for different languages?

- Should a voice interrupt the driver during heavy traffic regarding faster routes?

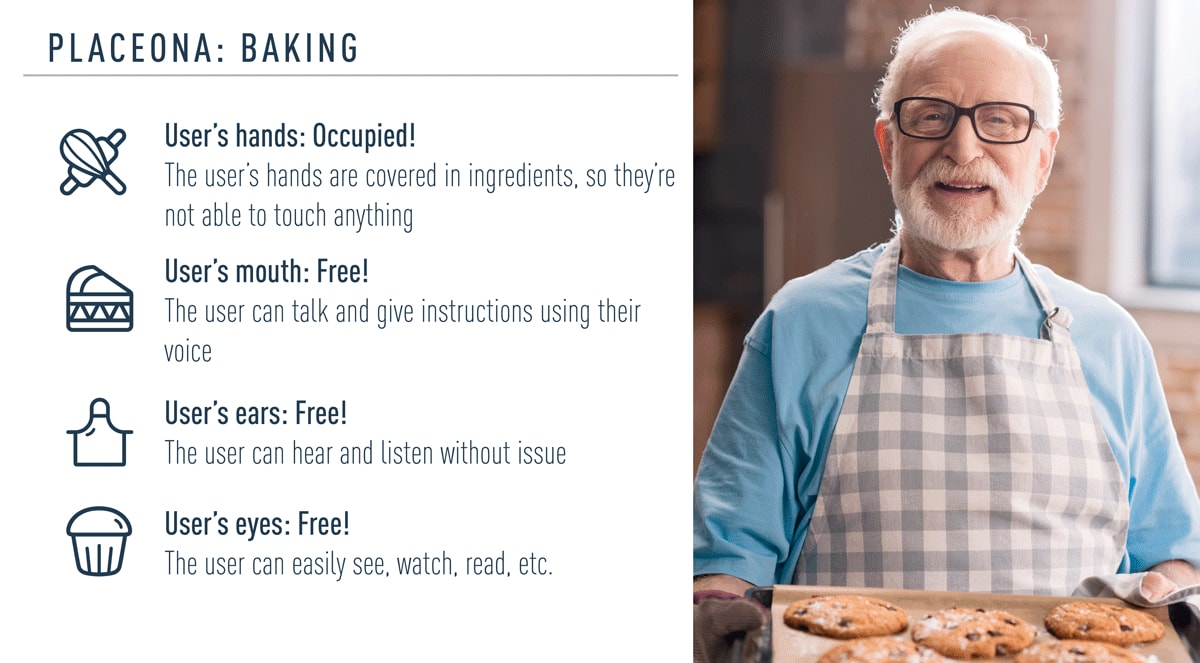

A placeona for the user scenario of baking. The user’s hands are occupied, while their mouth, ears, and eyes are free.

These questions are a vital part of the process of creating a voice persona. As mentioned, placeonas show how a location can limit the types of interaction that make sense. It depends on where you are, which in turn defines which of your senses and limbs are free to use. For example, if you’re in a classroom or learning setting, are you “hands-free, eyes and ears free, or voice restricted”? Say a user wants to learn German while driving: they are “hands and eyes busy, but ears and voice free.” While cooking, a user is “hands busy, but eyes, ears, and voice free.” This creates a multitude of possibilities for voice UX designers.
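One way to picture a placeona is as a small record of which channels are free in a given setting. The sketch below is only an illustration: the `Placeona` class and the `suggested_channels` helper are hypothetical names, and the mapping from free channels to input and output methods is an assumption rather than an established rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placeona:
    """Which of the user's channels are free in a setting (illustrative)."""
    name: str
    hands_free: bool
    eyes_free: bool
    ears_free: bool
    voice_free: bool


def suggested_channels(placeona):
    """Return the input and output channels that look usable for this placeona."""
    inputs = []
    if placeona.voice_free:
        inputs.append("speech")
    if placeona.hands_free:
        inputs.append("touch")

    outputs = []
    if placeona.ears_free:
        outputs.append("audio")
    if placeona.eyes_free:
        outputs.append("screen")

    return {"input": inputs, "output": outputs}


# The two settings described above.
DRIVING = Placeona("driving", hands_free=False, eyes_free=False,
                   ears_free=True, voice_free=True)
COOKING = Placeona("cooking", hands_free=False, eyes_free=True,
                   ears_free=True, voice_free=True)

print(suggested_channels(DRIVING))  # {'input': ['speech'], 'output': ['audio']}
print(suggested_channels(COOKING))  # {'input': ['speech'], 'output': ['audio', 'screen']}
```

Writing placeonas down this way makes it easy to see that the driver should get a voice-only exchange, while the cook can also be shown a recipe on screen.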

3. How to create conversations with voice devices

On-screen UX designers create wireframes or an early rendition of how a user will interact with an app across multiple screens. When designing for voice, the essential part is prototyping with sample dialogs or scripts. Writing dialogs helps to identify and fix problems, and prove that the interaction will be successful. A dialog flow (a script that shows the conversation between the user and the device) includes the following:

- Keywords that initiate the conversation with the device

- Branches that represent where the conversation could lead to (this could be infinite)

- Example scripts for both the user and the device
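The three parts listed above can be sketched as a small data structure plus a function that replays a scripted conversation. This is a toy illustration under stated assumptions, not how any particular prototyping tool stores its dialogs: `DIALOG_FLOW`, `run_dialog`, the keywords, and all of the wording are made up for the example.

```python
# Hypothetical dialog flow: trigger keywords, nodes with the device's line,
# and branches keyed by a word the user might say next.
DIALOG_FLOW = {
    "keywords": ["what's for dinner", "dinner ideas"],
    "start": "suggest",
    "fallback": "Sorry, I didn't catch that. Pasta or tacos?",
    "nodes": {
        "suggest": {
            "device": "How about pasta or tacos?",
            "branches": {"pasta": "pasta_recipe", "tacos": "taco_recipe"},
        },
        "pasta_recipe": {"device": "Great, here is a pasta recipe.", "branches": {}},
        "taco_recipe": {"device": "Great, here is a taco recipe.", "branches": {}},
    },
}


def run_dialog(flow, user_turns):
    """Replay scripted user turns and return the device's lines in order."""
    turns = iter(user_turns)
    opening = next(turns, "").lower()
    if not any(keyword in opening for keyword in flow["keywords"]):
        return []  # no trigger keyword heard, so the conversation never starts

    node = flow["nodes"][flow["start"]]
    transcript = [node["device"]]
    for reply in turns:
        if not node["branches"]:
            break  # this branch of the conversation has ended
        reply = reply.lower()
        next_id = next(
            (target for word, target in node["branches"].items() if word in reply),
            None,
        )
        if next_id is None:
            transcript.append(flow["fallback"])  # re-prompt and stay on this node
            continue
        node = flow["nodes"][next_id]
        transcript.append(node["device"])
    return transcript


for line in run_dialog(DIALOG_FLOW, ["Any dinner ideas?", "Hmm, burgers", "Tacos please"]):
    print(line)
```

Even a toy script like this surfaces design questions early, such as what the device should say when the user’s reply matches none of the branches.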

On-screen UX designers use prototyping tools like InVision and Prott to test their flows. Voice UX designers use Amazon’s Alexa Skill Builder, Google’s SDK, and Sayspring to prototype their interactions, and these are the tools in which the scripts are written and tested.

4. The importance of voice personas

In UX design, when designing mobile apps and websites, designers have to think about what information is vital and what information is secondary. UX designers try to employ the concept of minimalism in their work: less is more. Users don’t want to feel bewildered by content; however, they do need just the right amount of information to complete their tasks.

In voice design, you have to be careful because words are all you have to communicate with. As a result, it’s difficult to convey complex information and data, so fewer words are better. The script flow must stay conversational and simple to comprehend. Furthermore, confirmation of a completed task must be clear to the user. Say the user is downstairs and wants to turn off the upstairs bathroom light. The user needs confirmation that the task has been completed. A simple statement from the device, such as “The bathroom lights are now turned off. Goodnight, Ms. Stevenson,” is not only reassuring but also establishes trust without visual confirmation.

Creating voice personas is essential when designing for voice-enabled devices. So, how far are we from solely relying on voice-enabled technology to complete our tasks?

As we know, these devices are far from perfect. Any comment or command can easily be misinterpreted. Factors like tone and word choice affect the user’s experience of a voice user interface. This is why creating a voice persona is vital: people speak differently, with their own variations of tone and sentence structure. The better developed the voice persona, meaning the more we know about the user’s background, personality, age, and culture, the more we can figure out what kinds of words they will use, their mannerisms and frustrations, and their pitch and tone. A well-researched and documented persona helps voice design teams stay consistent and efficient at every stage.

So what if the user asks the interface something it can’t do? The device may simply apologize for not being able to accomplish the request. Users will understandably tend to assume that the device can accomplish any task or request. How will designers solve this ongoing problem? Through extensive research, repeated and rigorous quantitative and qualitative analysis, and an emphasis on studying placeonas. Even so, voice UX designers have only scratched the surface of voice interaction.

Bill Buxton is a specialist in human-computer interaction. He believes that innovation is reached by examining human social interactions. Since UX design involves a high level of empathy for the user, it makes sense that voice design follows the same principle. Although humans interact using their eyes, ears, voices, and hands, the process begins and ends with the heart.

Do you think voice interaction will completely replace on-screen interaction? How will this change the way people communicate, not only with devices but with each other?

If you’d like to learn more about voice user interface design, check out the following articles for further reading: